AI and Army astronauts: A judge advocate’s solution to protecting the soldier-astronaut

by Mitch Y. Topaloglu


| Due to the dual tyrannies of distance and data, a decentralized AI model in the form of Federated Learning (FL) can be, and has been, used in space for astronaut safety and privacy. |

With renewed attention being placed on the space program under the new Artemis campaign, the Army must be aware that more soldiers will be called upon to serve, whether on the International Space Station (ISS), a permanent lunar outpost, or eventually, a mission to Mars. The well-being of these soldiers is crucial for the success of their mission to explore and advance humanity. Unfortunately, for the first time in 65 years of spaceflight, NASA was forced to evacuate an astronaut of Crew-11 in January of 2026 due to an undisclosed health emergency.

Imagine if, instead of only being 400 kilometers away from Earth on the ISS, an astronaut had been 400,000 kilometers away on the Moon. Or imagine if the crew of a mission to Mars experienced a medical emergency in January 2026 when Mars was 400 million kilometers away. Those astronauts would have been stuck on the other end of a solar conjunction, out of contact with the Earth.

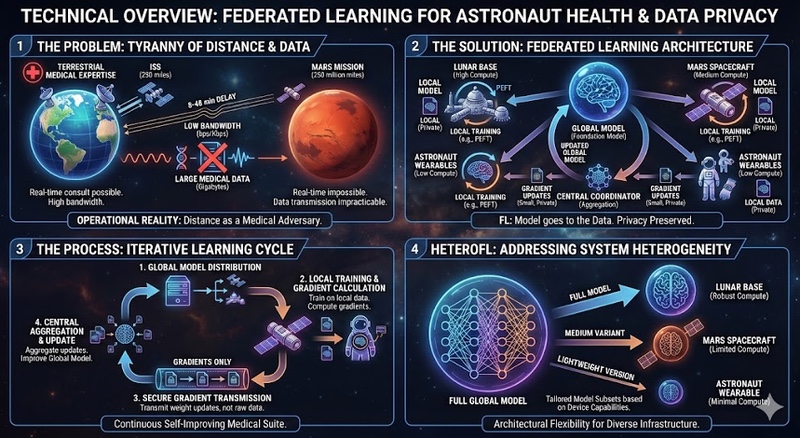

Medical evacuation would become impracticable, if not impossible. NASA has already begun employing AI healthcare tools for diagnosis and treatment. For the soldier-astronaut, there is the added and permanent concern of protecting sensitive health information from adversaries. Here, due to the dual tyrannies of distance and data, a decentralized AI model in the form of Federated Learning (FL) can be, and has been, used in space for astronaut safety and privacy, as seen in frame 1 of Figure 2 below.

The physics of space operations create medical challenges that have no terrestrial equivalent. A future lunar base would require a three-day transit. Mars missions face greater isolation: even in ideal conditions, the journey from Earth takes approximately seven to ten months, depending on orbital positions. Round-trip communications alone can consume roughly 40 minutes, making real-time medical intervention impossible.

Bandwidth constraints compound this tyranny of distance. NASA can currently return 50 megabits per second (Mbps) to a lunar base under ideal conditions, but Mars missions operate at far lower data rates (about 3.1 Mbps). In extreme cases, such as a solar conjunction, communications with Mars can black out entirely. A single human genome comprises three billion base pairs, requiring gigabytes of storage, and continuous biometric monitoring generates gigabytes more each day. Transmitting this volume of information to Walter Reed becomes practically impossible.

| Feature | ISS | Potential Lunar Base | Future Mars Outpost |
|---|---|---|---|
| Standard Bandwidth | 600 Mbps [1] | 50 Mbps [2] | 3.1 Mbps [3] |
| One-Way Latency | <1 second [6] | ~3–14 seconds [4] | 3 to 22 minutes [3] |
| Blackout Risk | Near-zero | Low (Lunar Occultation) | Periodic (Solar Conjunction) [5] |
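The distance and bandwidth figures above can be checked with simple arithmetic. The following is a minimal sketch, not an official link budget: the distances and data rates are the ones quoted in this article, while the 1-gigabyte payload and the function names are illustrative assumptions. Light-travel time is only a lower bound, since operational latency also includes relay and processing delays.

```python
# Back-of-the-envelope link estimate. Distances and bandwidths are the
# article's figures; the 1 GB payload is an illustrative assumption.
SPEED_OF_LIGHT_KM_S = 299_792.458


def one_way_delay_s(distance_km: float) -> float:
    """Light-travel time in seconds over a straight-line distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S


def transfer_time_s(payload_bytes: float, bandwidth_mbps: float) -> float:
    """Seconds to send a payload at a sustained rate, ignoring overhead."""
    return payload_bytes * 8 / (bandwidth_mbps * 1_000_000)


# (distance in km, bandwidth in Mbps) for each location
LINKS = {
    "ISS": (400, 600.0),
    "Moon": (400_000, 50.0),
    "Mars (400 million km)": (400_000_000, 3.1),
}
PAYLOAD_BYTES = 1_000_000_000  # hypothetical 1 GB biometric upload

for name, (distance_km, mbps) in LINKS.items():
    delay = one_way_delay_s(distance_km)
    upload = transfer_time_s(PAYLOAD_BYTES, mbps)
    print(f"{name}: one-way delay {delay:.1f} s, 1 GB upload {upload / 60:.1f} min")
```

Under these assumptions, a signal needs roughly 22 minutes to reach Mars at 400 million kilometers, and a single gigabyte takes about 43 minutes to send at 3.1 Mbps, before any retransmission or protocol overhead.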

The Crew-11 incident illustrates the vulnerability of reliance on Earth-side medical expertise. A medical condition could not be adequately resolved in orbit, forcing mission abort. The crew on the ISS reportedly used an ultrasound to aid in diagnosis. Given the latency and bandwidth realities of Mars, transmitting ultrasound data to ground control is a suboptimal solution. Ideally, the crew would employ AI for diagnostic or treatment support. While a centralized AI trained entirely on Earth could provide a framework, AI models require relevant data to provide relevant results. Put simply, the AI cannot fix what it does not know.

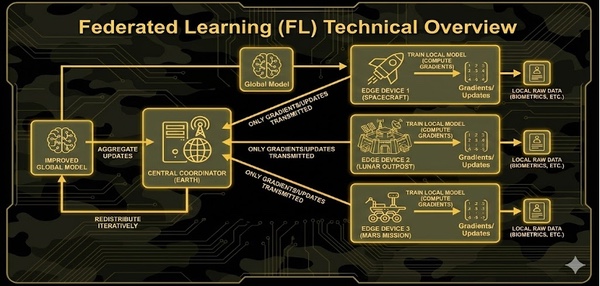

NASA has employed AI for years. FL, by contrast, has drawn attention only recently, owing to its versatility and to the bandwidth limitations of space-to-Earth communication. FL is a distributed machine learning paradigm that inverts the data-to-model relationship. Traditionally, raw data is sent to a model for training. In FL, the model travels to the data.

FL enables the AI to learn and adapt using real-time medical data without needing to transmit sensitive, high-volume information back to Earth. In a solar conjunction scenario on Mars, an FL-enabled system allows the spacecraft to remain a “learning node.” It can refine diagnostic algorithms locally, ensuring that the AI’s “medical knowledge” is tailored to the specific, evolving health profiles of those specific astronauts. By moving the model to the data, FL creates a self-improving medical suite that can function independently of Earth, effectively turning the spacecraft from a mere transport into a limited autonomous medical center.
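The idea of moving the model to the data can be illustrated with federated averaging (FedAvg), a common FL aggregation scheme. This is a minimal sketch under stated assumptions, not the system described in this article: the node names, the toy readings, the one-variable linear model, and the learning rate are all hypothetical.

```python
# Minimal federated averaging (FedAvg) sketch in pure Python.
# Node names and readings are made up for illustration.

def local_update(weights, data, lr=0.05, epochs=20):
    """Train a 1-D linear model y = w*x + b on one node's private data."""
    w, b = weights
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / len(data)
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / len(data)
        w, b = w - lr * grad_w, b - lr * grad_b
    return (w, b)


def federated_round(global_weights, node_datasets):
    """Send the model to each node, then average results by sample count."""
    total = sum(len(d) for d in node_datasets.values())
    new_w = new_b = 0.0
    for data in node_datasets.values():
        # Only the trained weights come back; raw data never leaves the node.
        w, b = local_update(global_weights, data)
        new_w += w * len(data) / total
        new_b += b * len(data) / total
    return (new_w, new_b)


# Hypothetical private readings at each node, all drawn from y = 2x + 1.
nodes = {
    "lunar_base": [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)],
    "spacecraft": [(0.5, 2.0), (1.5, 4.0)],
}

model = (0.0, 0.0)
for _ in range(50):
    model = federated_round(model, nodes)
print(model)  # approaches (2.0, 1.0)
```

In this sketch the coordinator only ever handles the two model parameters. The readings stay where they were collected, which is the property the legal analysis later relies on.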

FL radically updates the data-to-model framework:

Figure 1. A diagram of a proposed federated learning architecture for space operations

Space medical systems face unique challenges that standard FL has not addressed. A lunar base might have robust computing resources, while a small spacecraft has minimal capability. Traditional FL assumes all nodes share the same computing capacity, but this assumption fails in space, where hardware capabilities differ vastly, as shown in frame 2 of Figure 2.

| Federated Learning enables the AI to learn and adapt using real-time medical data without needing to transmit sensitive, high-volume information back to Earth. |

HeteroFL is a federated learning framework that addresses nodes equipped with very different computation and communication capabilities. In essence, not all space systems are created equal, but all systems can still contribute to a PEFT.

In practice, HeteroFL permits a lunar base to train on a full-complexity model, a spacecraft to train on a medium-complexity variant, and astronaut wearables to train on lightweight versions. All models can contribute to the same global medical AI as seen in frame 4 of Figure 2. Each system trains the portion of the model it can support, transmits gradients based on its bandwidth, and receives model updates scaled to its capabilities.
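The tiered training described above can be sketched as follows. This is a simplified, HeteroFL-style illustration rather than the published implementation: it assumes each client trains the leading block of one shared weight matrix, and the device names, width ratios, and the stand-in "training" step are hypothetical.

```python
# HeteroFL-style aggregation sketch: each client trains the leading
# block of a shared weight matrix, sized to its hardware.
# Device names and width ratios are hypothetical.

def extract_submodel(global_w, ratio):
    """Return the leading (rows*ratio) x (cols*ratio) block for a client."""
    rows = max(1, int(len(global_w) * ratio))
    cols = max(1, int(len(global_w[0]) * ratio))
    return [row[:cols] for row in global_w[:rows]]


def aggregate(global_w, client_updates):
    """Average each entry over only the clients that trained it."""
    new_w = [row[:] for row in global_w]
    for i in range(len(global_w)):
        for j in range(len(global_w[0])):
            vals = [u[i][j] for u in client_updates
                    if i < len(u) and j < len(u[0])]
            if vals:  # entries no client trained keep their old value
                new_w[i][j] = sum(vals) / len(vals)
    return new_w


global_w = [[0.0] * 4 for _ in range(4)]
ratios = {"lunar_base": 1.0, "spacecraft": 0.5, "wearable": 0.25}

updates = []
for step, (name, ratio) in enumerate(ratios.items(), start=1):
    sub = extract_submodel(global_w, ratio)
    # Stand-in for local training: shift every weight by a fixed amount.
    updates.append([[w + step for w in row] for row in sub])

global_w = aggregate(global_w, updates)
print(global_w[0][0], global_w[1][1], global_w[3][3])  # 2.0 1.5 1.0
```

The top-left entry is averaged over all three devices, the next block over the lunar base and spacecraft only, and the remainder comes from the lunar base alone. Every device contributes to the same global matrix in proportion to what it can hold.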

Figure 2: A proposed solution for soldier-astronaut privacy. Frame 1: The bandwidth bottleneck. Frame 2: An overview of the levels of FL in space. Frame 3: The iterative nature of FL. Frame 4: HeteroFL, built around different scales.

The Privacy Act establishes that federal agencies may not disclose records contained in a “System of Records” without written consent, subject to specific exceptions. A System of Records is any group of records under agency control from which information is retrieved by personal identifier. Any centralized database aggregating health records from multiple soldier-astronauts constitutes a System of Records, triggering comprehensive notice requirements and severely restricting disclosure. Each willful unauthorized access constitutes a separate violation, exposing the United States to penalties of actual damages plus fees.

DoD Instruction 6025.18 implements HIPAA privacy standards within the Military Health System. Central to this instruction is the “Minimum Necessary” standard: covered entities must limit uses and disclosures of Protected Health Information (PHI) to the minimum necessary to accomplish the intended purpose. When multiple technical approaches exist to accomplish a mission, the legal mandate is to select the approach that minimizes PHI collection, use, and disclosure.

Traditional centralized training requires collecting, transmitting, and storing complete medical records. This maximizes data collection but creates tension with the Minimum Necessary standard. FL accomplishes the medical AI role while transmitting only mathematical gradients, which are not individually identifiable health information. When two architectural approaches accomplish the same mission but one requires transmitting PHI while the other transmits only gradients, the Minimum Necessary standard prioritizes selecting the latter.

The Health Information Technology for Economic and Clinical Health (HITECH) Act strengthened HIPAA’s enforcement mechanisms and imposed additional requirements on HIPAA’s covered entities. HITECH’s most consequential provision for AI deployment is its breach notification requirement. Unauthorized acquisition, access, use, or disclosure of PHI that compromises its security or privacy constitutes a breach requiring notification to affected individuals, the Department of Health and Human Services, and in cases affecting more than 500 individuals, public media notification.

At first glance, this might seem a premature concern. There have only been about 400 astronauts so far in the history of NASA. While the number of Army astronauts is a far cry from the 500 required to trigger public media notification, rapid growth of the corps of soldier-astronauts may be necessary within the next decade. If the United States seeks to compete with China in a new space race, it will need many more astronauts to do so.

| As we expand into the solar system, maintaining Earth-dependent oversight becomes untenable. Artificial intelligence offers the only viable path forward that can coexist within the legal framework of today. |

For centralized medical systems, a single security compromise could then expose the complete medical histories of hundreds of astronauts, triggering mass breach notification. The consequences extend beyond administrative inconvenience. Adversaries obtaining comprehensive health profiles of soldier-astronauts gain intelligence for targeting, exploitation, and psychological operations. HITECH also mandated security risk assessments and imposed enhanced penalties, with civil monetary penalties reaching $1.5 million per violation category per year for willful neglect.

Federated Learning may be the ideal AI architecture that satisfies DoDI 6025.18’s Minimum Necessary standard for space-based medical applications. The mission is clear: deploy decentralized medical AI capable of autonomous diagnosis. Centralized models require collecting, transmitting, and storing complete medical records. This maximizes data collection to the detriment of Minimum Necessary. FL accomplishes the mission while transmitting only gradients, which are not individually identifiable health information.

FL avoids creating centralized targets. Medical records remain distributed across their points of collection. No central vault contains all records. The central coordinator possesses only the global model and aggregated gradients, which cannot be reverse engineered to reconstruct individual patient data when secure aggregation techniques are employed.

This architectural feature has legal significance across multiple frameworks. First, if no central database retrieves information by personal identifier, there may be no System of Records triggering Privacy Act obligations. Second, from a HITECH Act perspective, FL dramatically reduces breach risk exposure. A compromise of a single edge node affects only that node’s crew, potentially as small as a handful of individuals rather than the entire astronaut corps. This proportionate risk profile means that even in worst-case security failures, the breach notification burden remains manageable and the intelligence value to adversaries remains limited.

AR 40-66’s role-based access requirements align naturally with FL’s distributed architecture. The Flight Surgeon or medical officer responsible for a crew retains complete control over their patients’ records. The local medical AI trains on this data under their authority as part of providing care. Central command, data scientists, and system administrators never gain access to individual patient records. They interact only with the global model.

The future of medical care demands decentralized edge computing at each spacecraft and habitat module. Edge nodes continuously train on local data to improve predictive accuracy for the specific crew. Periodically, as bandwidth allows, nodes compute gradients and transmit them under secure aggregation protocols that prevent inspection of any individual node’s gradients. A ground-based coordinator receives the encrypted gradients, aggregates them without inspecting individual contributions, and produces an updated global model. The improved model is distributed back to edge nodes, providing each crew with the benefit of collective learning without compromising individual privacy.
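One way a coordinator can aggregate without inspecting individual contributions is pairwise masking, a building block of secure aggregation protocols. The article does not specify a protocol, so the following is a toy sketch under loud assumptions: a shared seeded generator stands in for secrets that each pair of nodes would agree on privately, there is no key exchange, encryption, or dropout handling, and all node names and gradient values are invented.

```python
# Pairwise-masking sketch of secure aggregation (toy version: no key
# exchange, dropout handling, or encryption). All values are made up.
import random

MODULUS = 2**32
SCALE = 1000  # fixed-point scale for quantizing gradients to integers


def mask_updates(updates, seed=0):
    """Add pairwise masks that cancel when all masked updates are summed."""
    rng = random.Random(seed)  # stands in for pairwise-agreed secrets
    names = sorted(updates)
    dim = len(next(iter(updates.values())))
    masked = {n: [round(g * SCALE) % MODULUS for g in updates[n]] for n in names}
    for a_idx, a in enumerate(names):
        for b in names[a_idx + 1:]:
            for k in range(dim):
                m = rng.randrange(MODULUS)
                masked[a][k] = (masked[a][k] + m) % MODULUS  # one node adds
                masked[b][k] = (masked[b][k] - m) % MODULUS  # its peer subtracts
    return masked


def aggregate(masked):
    """Coordinator sums masked vectors and recovers only the mean."""
    dim = len(next(iter(masked.values())))
    totals = [sum(v[k] for v in masked.values()) % MODULUS for k in range(dim)]
    # Map back from modular integers to signed fixed-point values.
    signed = [t - MODULUS if t >= MODULUS // 2 else t for t in totals]
    return [s / SCALE / len(masked) for s in signed]


gradients = {
    "habitat": [0.10, -0.20],
    "spacecraft": [0.30, 0.40],
    "wearable": [-0.10, 0.10],
}
masked = mask_updates(gradients)
print(aggregate(masked))  # approximately [0.1, 0.1], the mean gradient
```

Each masked vector looks like random integers on its own, yet the masks cancel in the sum, so the coordinator recovers the average gradient and nothing about any single node. Production protocols add authenticated key agreement and recovery when a node drops out mid-round.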

HeteroFL enhances this architecture by allowing each edge node to train models sized appropriately for its hardware—full neural networks on robust habitat systems, medium-sized models on spacecraft, lightweight versions on wearables. All contribute to the same global model, maximizing both privacy protection and operational capability across the solar system.

An explainability challenge remains: when a federated model makes a diagnostic error, investigating the failure is more complex than in a centralized architecture. However, local training data remains available at edge nodes for audit purposes. Importantly, technical audit challenges do not create statutory liability in the same way that Privacy Act violations do. Data poisoning risks also exist if an adversary compromises an edge node or sensor system. However, these are technical security challenges, not privacy or legal compliance issues. Technical risks can be mitigated through advances in computer science. Privacy violations expose the Army to civil liability, Congressional oversight, and potentially to adversary manipulation.

First, when technically feasible, centralized AI approaches to medical applications may constitute violations of DoDI 6025.18’s Minimum Necessary standard. Judge Advocates (JAs) should proactively ask vendors whether privacy-preserving alternatives were considered.

Second, Privacy Impact Assessments should explicitly evaluate federated architectures in their alternatives analysis and address HITECH-inspired considerations, including breach risk exposure and the intelligence value centralized databases present to adversaries.

Third, the Pentagon should develop template Model Sharing Agreements and legal guidance formally classifying gradient updates as non-PHI to facilitate rapid capability sharing across commands.

Fourth, JAs should work with medical authorities to establish clear documentation distinguishing quality assurance (QA) activities from human subjects research when deploying FL systems, emphasizing the continuous improvement nature of FL and the primacy of patient care over knowledge generation.

Fifth, the Pentagon should establish annual reviews of operational FL deployments comparing actual privacy performance against projections. As privacy-preserving technologies evolve (e.g., homomorphic encryption and improved differential privacy), the Pentagon must periodically reassess whether current architectures remain optimal or whether newer techniques should replace existing systems. The goal is to achieve continuous improvement in privacy protection while maintaining operational capability.

The Crew-11 incident demonstrates that privacy-protective data silos in space operations carry operational costs. As the Department of War expands into the solar system, maintaining Earth-dependent oversight becomes untenable. Artificial intelligence offers the only viable path forward that can coexist within the legal framework of today.

Federated Learning provides a legal strategy that aligns AI capability with privacy protection. For JAs advising on medical AI for space operations, privacy-preserving architectures are not optional enhancements; they may be legally mandated by existing regulations, operationally necessary given bandwidth constraints, and ethically required to protect soldiers who forge the republic’s future in the Army’s most extreme environment yet.
